Have you heard about Google BERT?

Google recently announced what it describes as one of the biggest improvements to Google Search so far: the BERT update.

What does BERT stand for? Well, the answer is not easy to understand:

BERT stands for Bidirectional Encoder Representations from Transformers… What does this mean?

Before going deeper into the technical explanation: BERT is the enhanced way Google understands search queries. It is an advanced machine learning model that aims to better understand both queries and the content of a web page, following a human-like approach.

Any impact? Yes:

- A huge enhancement for Google searchers, of course.

- Google Translate enhancements.

- Potential Search Engine Optimization (SEO) changes, which concern many SEO specialists and website owners.

Per Danny Sullivan, “BERT doesn’t assign values to pages. It’s just a way for us to better understand language.”

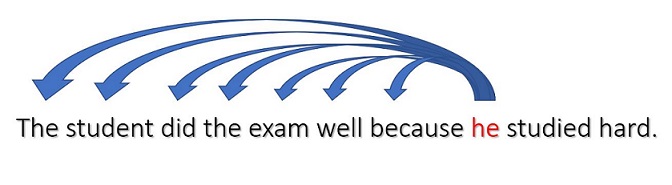

It is still too early to assess the full impact of the new update, but from what Google has shared so far, the change does not target page rankings; rather, it is a step toward a better understanding of words in CONTEXT. To simplify the complexity of machine learning networks, let me provide a simple example:

The above example shows a sequential processing approach, which makes it difficult to learn long-term dependencies: each word learns only from the words before it. What does “He” in the above example refer to? For you, it is easy to guess that it refers to “Student”. But for an algorithm or a learning model, it is a hard task.

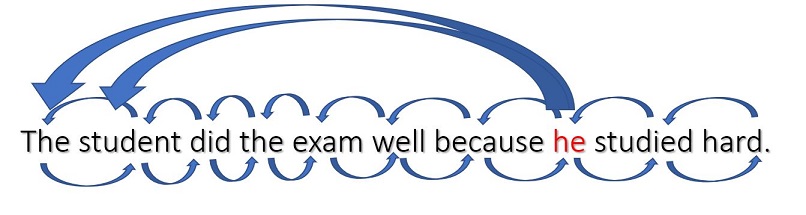

BERT, by contrast, uses what is called self-attention, which makes it possible to better understand a word in its context.

As shown above, the word HE can learn not only from previous words but also from any word in the context, before or after it.
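For readers who like to see the idea in code, here is a minimal sketch of the difference between left-to-right processing and bidirectional self-attention. It is a toy under stated assumptions, not Google's implementation: the sentence is hypothetical, the word vectors are random stand-ins for learned embeddings, and the helper names (`softmax`, `attention_weights`) are my own. A real BERT model also uses learned query/key/value projections, multiple attention heads, and many layers, all of which are left out here.

```python
import math
import random


def softmax(scores):
    """Turn raw scores into weights that sum to 1 (masked scores are -inf)."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_weights(vectors, causal):
    """Scaled dot-product attention weights for every token.

    causal=True  -> each token may only look at itself and earlier tokens.
    causal=False -> each token may look at every token (bidirectional).
    """
    dim = len(vectors[0])
    weights = []
    for i, query in enumerate(vectors):
        scores = []
        for j, key in enumerate(vectors):
            if causal and j > i:
                scores.append(float("-inf"))  # hide tokens that come later
            else:
                dot = sum(q * k for q, k in zip(query, key))
                scores.append(dot / math.sqrt(dim))
        weights.append(softmax(scores))
    return weights


# Hypothetical sentence, chosen so the referent comes AFTER the pronoun.
tokens = ["because", "he", "studied", "the", "student", "passed"]

# Random stand-ins for learned embeddings (assumption: a real model learns these).
random.seed(0)
vectors = [[random.uniform(-1, 1) for _ in range(4)] for _ in tokens]

he, student = tokens.index("he"), tokens.index("student")
left_to_right = attention_weights(vectors, causal=True)
bidirectional = attention_weights(vectors, causal=False)

print("left-to-right  he -> student:", left_to_right[he][student])
print("bidirectional  he -> student:", round(bidirectional[he][student], 3))
```

In the left-to-right case the weight from "he" to "student" is exactly 0, because "student" comes later in the sentence and is hidden. In the bidirectional case the weight is positive, so "he" can draw on "student" even though it appears afterwards. How large that weight is depends on the vectors, which is exactly what training is meant to tune.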

That’s where the “Bidirectional” in Bidirectional Encoder Representations from Transformers comes from.

To conclude, BERT so far seems to be a new, advanced approach to better understanding users’ queries. It is not an update that targets page validation or ranking. At the same time, because this algorithm aims to better understand words in their context, SEO specialists should prioritize user-friendly approaches rather than relying only on technical or tactical ones for ranking. We are dealing with smarter machine learning models, so we should expect more updates like this in the future.

Please feel free to leave a comment or contact me for an open discussion.